The False Choice We Keep Making About AI

Why "For or Against" Is Failing Librarians and Students

Editor’s Note

Over the past few weeks, I have seen Facebook posts and comments circulating that call out me and other colleagues, by implication, for engaging with AI. Rather than responding in fragments or comment threads, I want to step back and address the larger issue those posts reveal. This piece is not a rebuttal or a defense of any single tool. It is an attempt to have the conversation our profession keeps circling without fully entering.

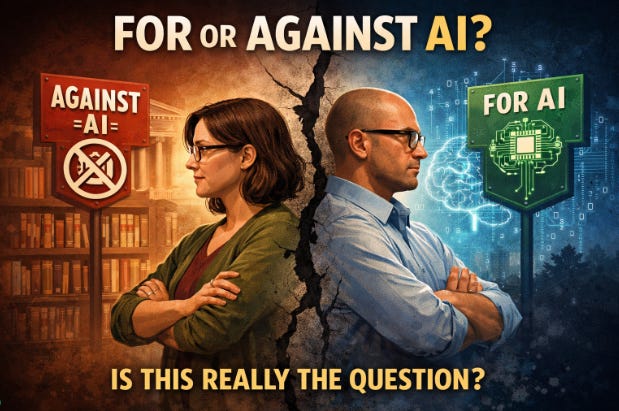

The conversation around artificial intelligence in education and libraries has collapsed into a false choice. You are either for AI or against it. You are either embracing it or resisting it. That framing may feel decisive, but it is deeply misleading.

It is also dangerous.

Refusing to engage with AI does not prevent it from shaping students’ lives, learning, labor, or access to information. It simply ensures those forces operate without guidance, ethics, or shared understanding.

Libraries have never existed to avoid complexity. We exist to help people make sense of it.

A familiar pattern in library history

This moment is not new.

Books themselves were once accused of damaging memory and moral character. Public libraries were criticized for making information too accessible and for lowering intellectual standards. Later, the internet was supposed to make libraries obsolete altogether.

Each time, opting out was framed as protection.

Each time, it failed.

Libraries did not remain relevant by rejecting new information technologies. They remained relevant by teaching people how to use them thoughtfully, critically, and ethically. Information literacy did not emerge because tools were harmless, but because they were powerful.

AI belongs in that lineage.

The uncomfortable reality we need to name

AI is already embedded in daily life.

The platforms where we debate AI use rely on AI systems. The phones, keyboards, cameras, spell checkers, recommendation engines, moderation tools, and accessibility features we use every day are shaped by AI. Saying “I do not use AI” is rarely accurate in any meaningful sense. More often, it means avoiding certain visible or generative uses.

Students, meanwhile, are not waiting for adult consensus. Many middle and high school students have already used generative AI tools, often without guidance and often unsure whether their use is allowed, ethical, or even detectable. At the college level, usage is already commonplace.

When adults opt out of the conversation, students do not stop using AI. They simply stop talking to us about it.

What ignoring AI actually teaches

When librarians and educators refuse to engage, we teach students several lessons, whether we intend to or not.

We teach that powerful information systems are taboo rather than examinable.

We teach that ethics are about abstinence rather than judgment.

We teach that schools and libraries are disconnected from the world students inhabit.

That gap does not protect learners. It erodes trust.

This is also an equity issue

Students with access to thoughtful guidance will learn how to question AI, use it selectively, and understand its limits. Students without that guidance will still use it, but without context, safeguards, or critical framing.

Silence does not create fairness. It advantages students who already have support and leaves others to navigate complex systems alone. Libraries have always existed to narrow that gap, not widen it.

This is not about enthusiasm. It is about responsibility.

There are real concerns about AI. Environmental impact. Labor exploitation. Data privacy. Copyright. Bias. Academic integrity. These concerns are not reasons to disengage. They are reasons to teach about them.

Ethical engagement does not mean telling students to use AI. It means teaching them when not to. It means explaining how training data works, why outputs can be unreliable, how authorship is complicated, and where refusal is the right choice.

Ethical instruction does not require certainty. It requires engagement, reflection, and a willingness to revise our thinking as the landscape changes.

Yes, there are uses I will not promote. There are tools I will not recommend. There are moments when “do not use this” is the most responsible instruction we can give.

But silence is not ethics. It is withdrawal.

A word about the audience and backlash

If you are reading a newsletter called The AI School Librarian, you are likely already open to learning about these tools. You have probably also experienced pushback for that curiosity. Learning is often mistaken for endorsement. Inquiry is mistaken for recklessness.

That tension is real.

This work lives in an uncomfortable middle space, between uncritical adoption and outright refusal, between hype and fear, between institutional pressure and professional concern.

Holding that space is not easy. It is also not optional.

How we talk to each other matters

One of the most troubling aspects of this moment is not the disagreement itself, but how it is handled.

Public call-outs, screenshots, and pile-ons may feel like accountability, but they rarely lead to better practice. They harden positions and discourage honest questioning. When curiosity is punished, silence becomes the safer choice.

Librarians should know better.

We can disagree without humiliating colleagues. We can raise ethical concerns without turning individuals into warnings. We can talk about AI without making it a loyalty test.

If we cannot model civil, nuanced disagreement about complex technologies, we should not be surprised when public discourse becomes brittle and polarized.

The question we need to ask ourselves

As librarians, are we truly serving our students and patrons if we choose to ignore AI?

Not if ignoring it means silence.

Not if ignoring it means opting out of instruction.

Not if ignoring it means leaving people to navigate powerful systems on their own.

Service has never meant approval. It has meant preparation.

If librarians step back, others step in. Platforms. Corporations. Influencers. None of them are accountable to educational ethics or intellectual freedom in the way libraries are.